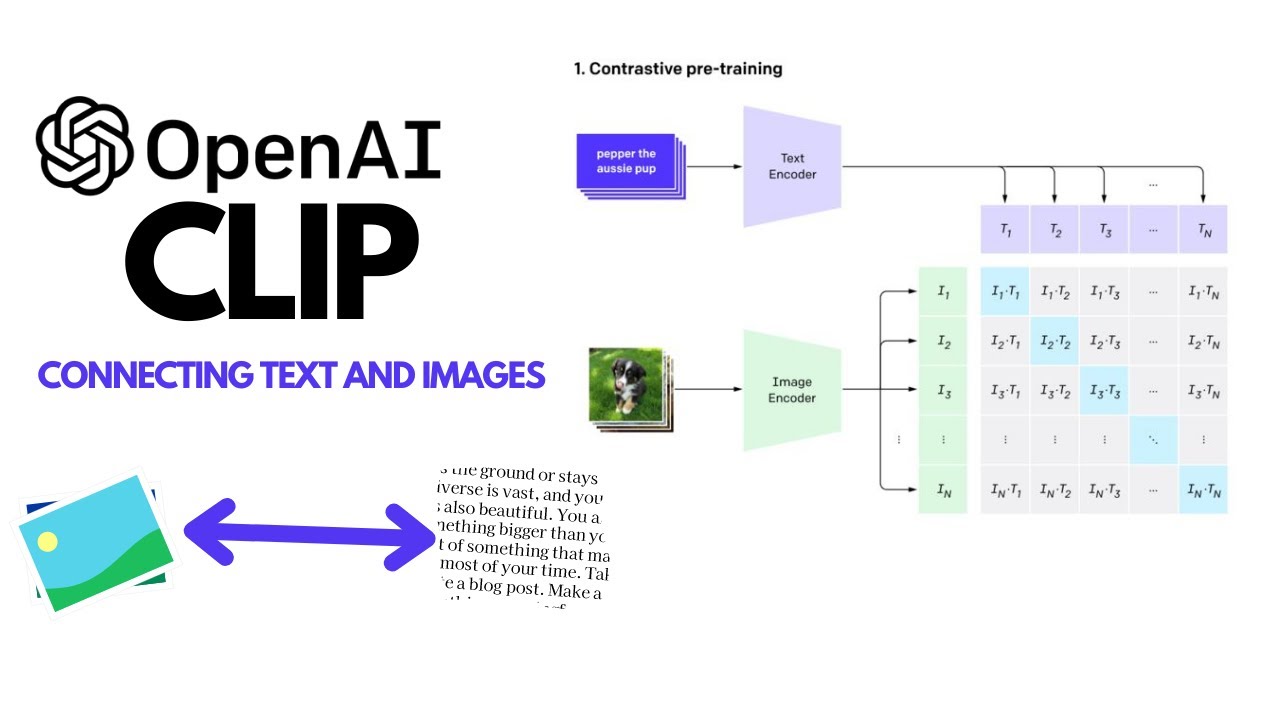

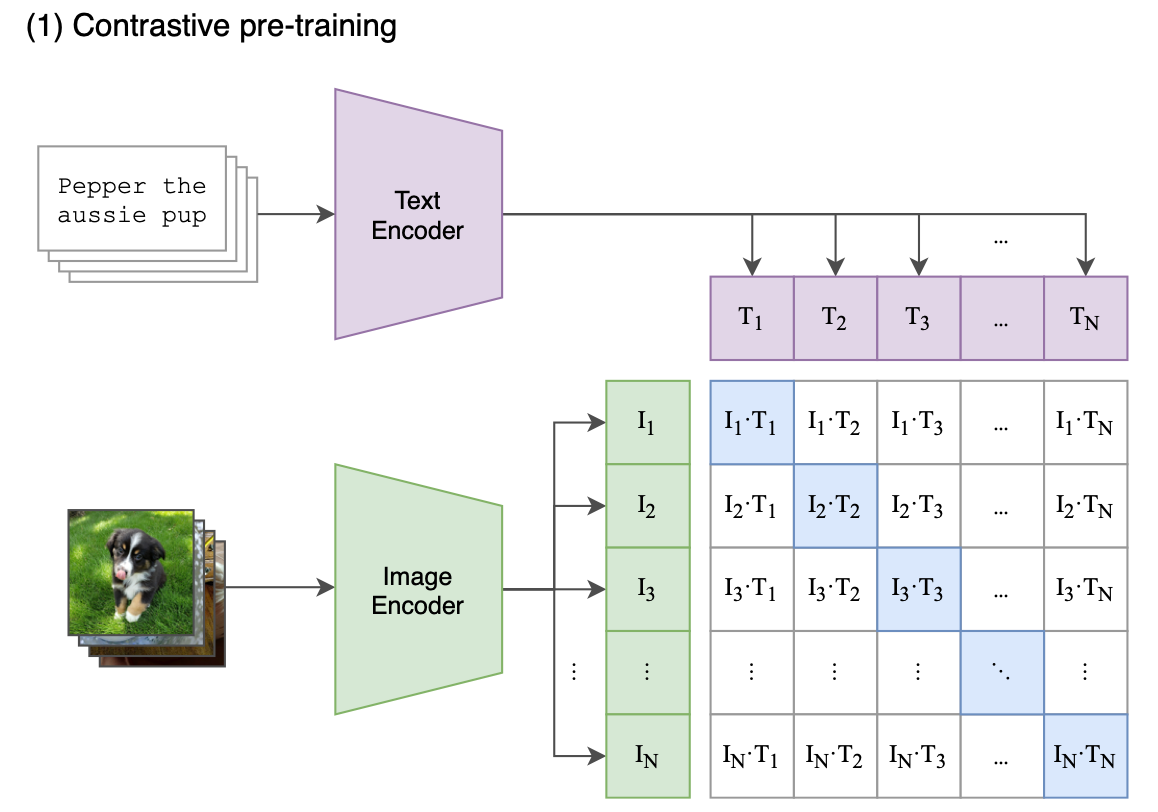

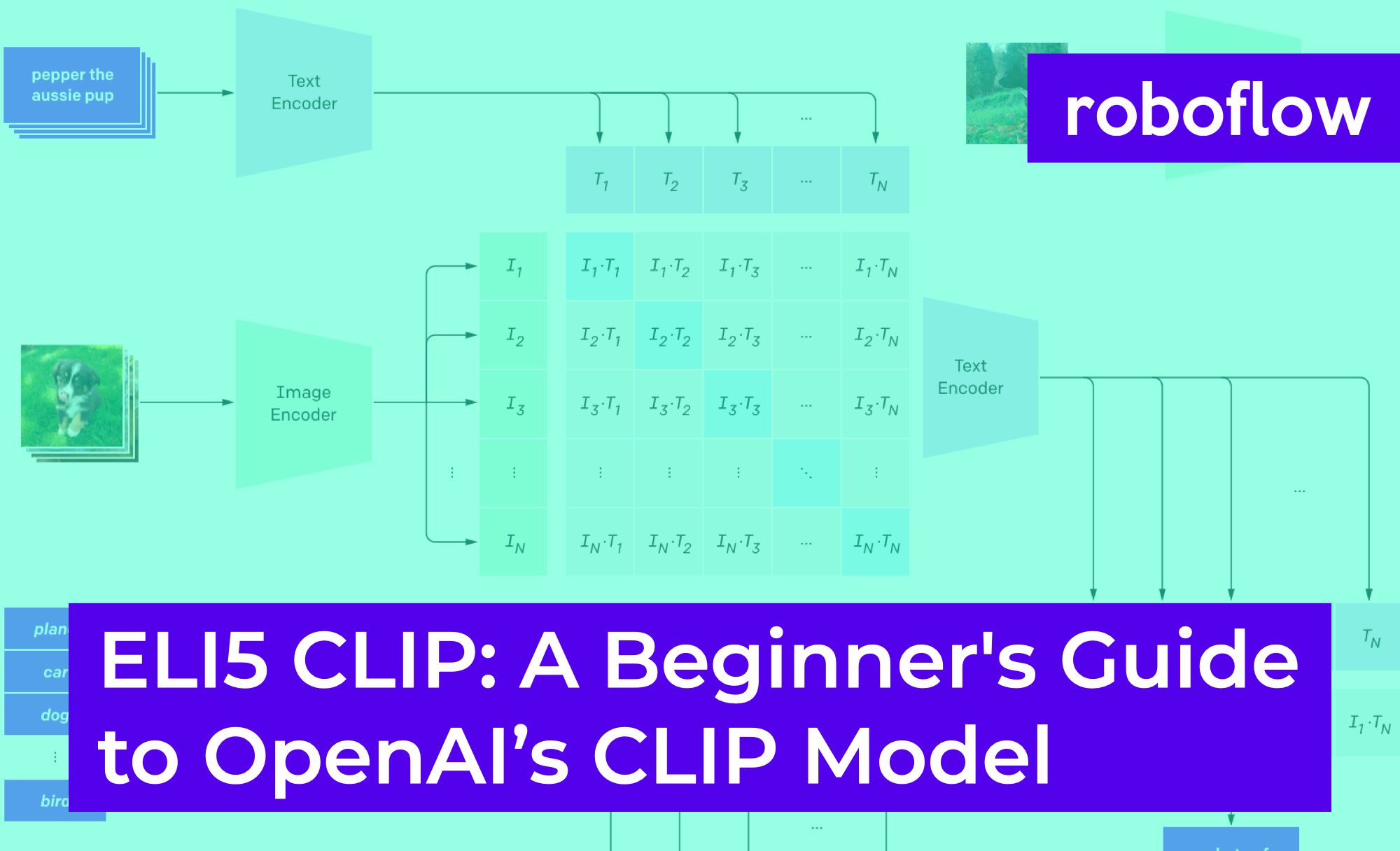

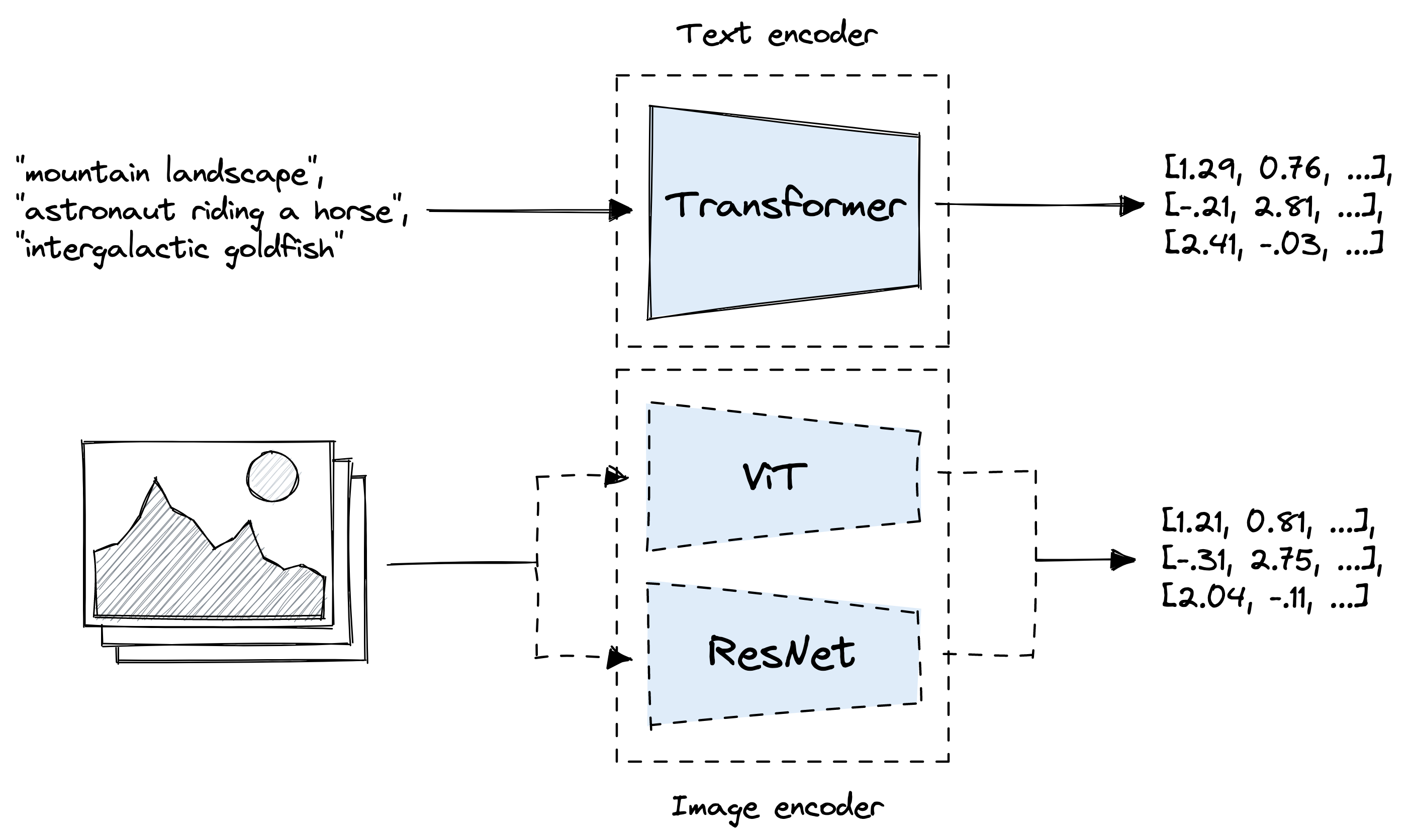

GitHub - openai/CLIP: CLIP (Contrastive Language-Image Pretraining), Predict the most relevant text snippet given an image

Collaborative Learning in Practice (CLiP) in a London maternity ward: a qualitative pilot study - ScienceDirect

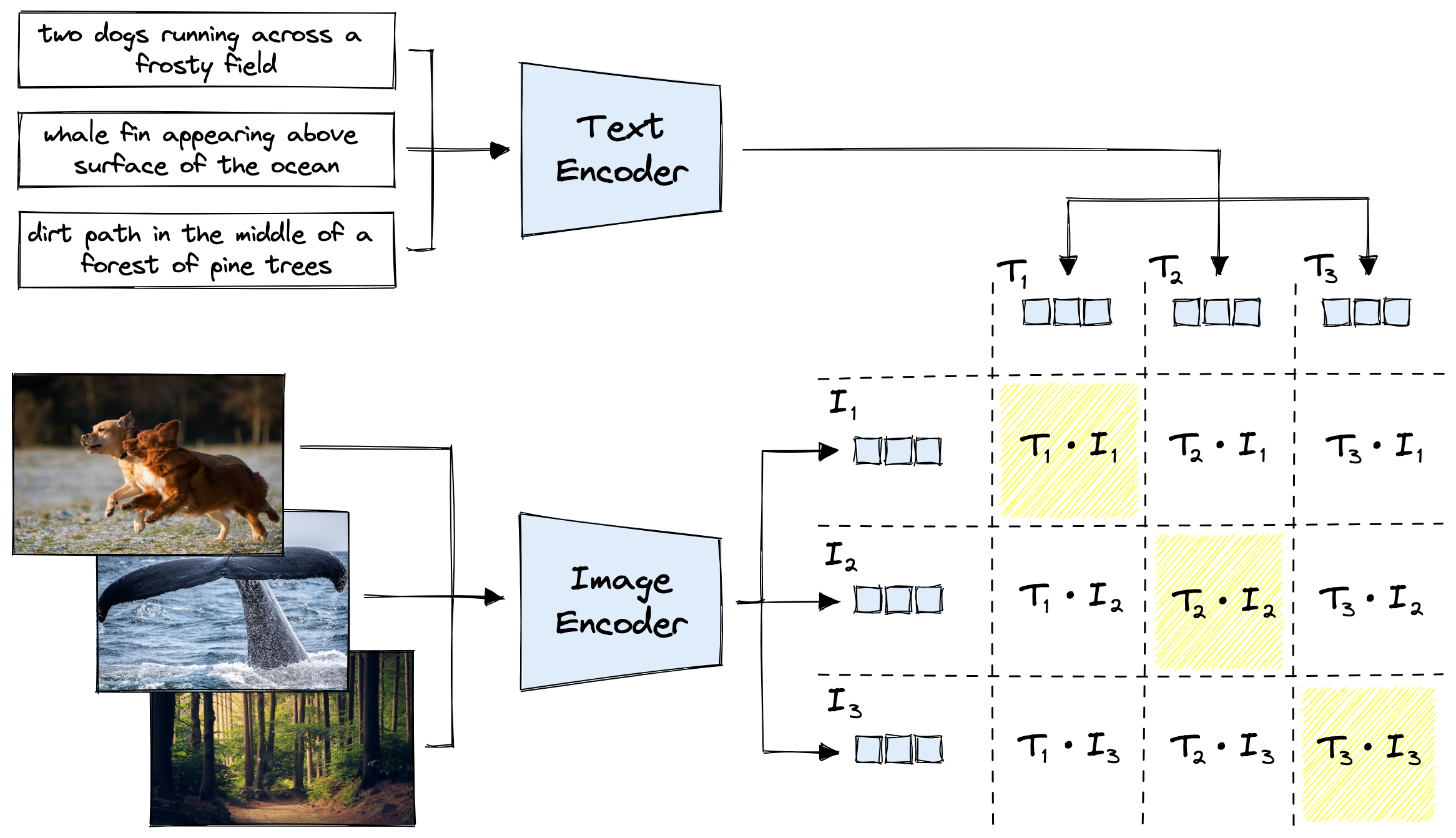

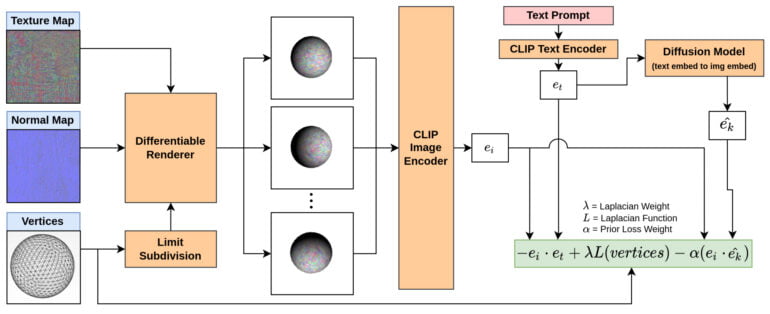

Process diagram of the CLIP model for our task. This figure is created...